
Google’s John Mueller was asked in an SEO Office Hours podcast whether blocking the crawl of a web page cancels the “linking power” of either internal or external links. His answer suggested an unexpected way of looking at the problem and offers an insight into how Google Search internally approaches this and other situations.

About The Power Of Links

There are many ways to think about links, but when it comes to internal links, the one Google consistently talks about is using internal links to tell Google which pages are the most important.

Google hasn’t published any patents or research papers lately about how it uses external links for ranking web pages, so pretty much everything SEOs know about external links is based on old information that may be out of date by now.

What John Mueller said doesn’t add anything to our understanding of how Google uses inbound links or internal links, but it does offer a different way to think about them that, in my opinion, is more useful than it appears at first glance.

Impact On Links From Blocking Indexing

The person asking the question wanted to know whether blocking Google from crawling a web page affects how Google uses internal and inbound links.

This is the question:

“Does blocking crawl or indexing on a URL cancel the linking power from external and internal links?”

Mueller suggests finding an answer to the question by thinking about how a user would react to it, which is a curious answer but also contains an interesting insight.

He answered:

“I’d look at it like a user would. If a page is not available to them, then they wouldn’t be able to do anything with it, and so any links on that page would be somewhat irrelevant.”

The above aligns with what we know about the relationship between crawling, indexing and links. If Google can’t crawl a link then Google won’t see the link, and therefore the link will have no effect.

Keyword Versus User-Based Perspective On Links

Mueller’s suggestion to look at it the way a user would is interesting because it’s not how most people would think about a link-related question. But it makes sense, because if you block a person from seeing a web page then they wouldn’t be able to see the links, right?

What about external links? A long, long time ago I saw a paid link for a printer ink website that was on a marine biology web page about octopus ink. Link builders at the time thought that if a web page had words in it that matched the target page (octopus “ink” to printer “ink”) then Google would use that link to rank the page because the link was on a “relevant” web page.

As dumb as that sounds today, a lot of people believed in that “keyword-based” approach to understanding links, as opposed to the user-based approach that John Mueller is suggesting. Looked at from a user-based perspective, understanding links becomes a lot easier and most likely aligns better with how Google ranks links than the old-fashioned keyword-based approach.

Optimize Links By Making Them Crawlable

Mueller continued his answer by emphasizing the importance of making pages discoverable with links.

He explained:

“If you want a page to be easily discovered, make sure it’s linked to from pages that are indexable and relevant within your website. It’s also fine to block indexing of pages that you don’t want discovered, that’s ultimately your decision, but if there’s an important part of your website only linked from the blocked page, then it will make search much harder.”
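Mueller's point about pages that are only linked from a blocked page can be illustrated with a small link-graph walk. The following is a minimal sketch, not a description of how Google actually crawls: the site structure, the URLs, and the `discoverable_pages` helper are all hypothetical, and the model simply assumes that links on a blocked page are never read.

```python
from collections import deque


def discoverable_pages(links, blocked, start):
    """Return the pages reachable from `start` when links on blocked pages are ignored.

    `links` maps a page to the pages it links to; `blocked` is the set of
    pages whose content (and therefore whose links) cannot be read.
    """
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        if page in blocked:
            # The URL is known because something linked to it, but its own
            # links are never read, so nothing is discovered through it.
            continue
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


# Hypothetical site structure, for illustration only.
site_links = {
    "/": ["/products", "/archive"],
    "/products": ["/products/ink"],
    "/archive": ["/guides/important"],
}
blocked_pages = {"/archive"}

found = discoverable_pages(site_links, blocked_pages, "/")
all_pages = set(site_links) | {t for targets in site_links.values() for t in targets}
orphaned = sorted(all_pages - found)
print(orphaned)
```

In this toy site, `/guides/important` is linked only from the blocked `/archive` page, so the walk never reaches it. Adding a link to it from an indexable page such as `/products` would make it discoverable again.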

About Crawl Blocking

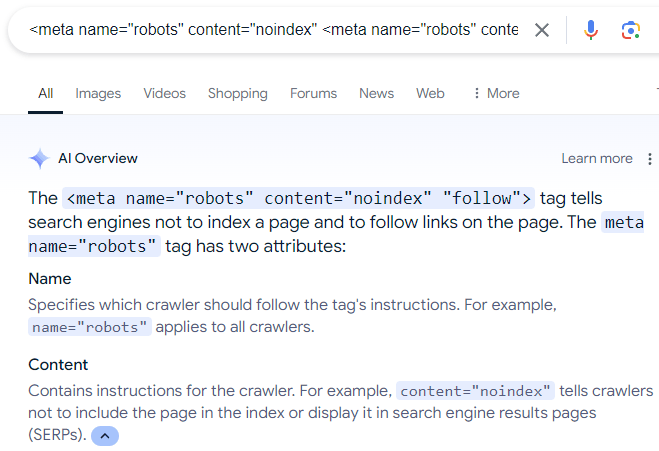

A final word about blocking search engines from crawling web pages. A surprisingly common mistake that I see some site owners make is using the robots meta directive to tell Google not to index a web page but to crawl the links on the web page.

The (erroneous) directive looks like this: `<meta name="robots" content="noindex, follow">`

There is a lot of misinformation online that recommends the above robots directive, which is even reflected in Google’s AI Overviews:

Screenshot Of AI Overviews

Of course, the above robots directive doesn’t work because, as Mueller explains, if a person (or search engine) can’t see a web page then the person (or search engine) can’t follow the links that are on the web page.

Also, while there is a “nofollow” directive that can be used to make a search engine crawler ignore links on a web page, there is no “follow” directive that forces a search engine crawler to crawl all the links on a web page. Following links is a default behavior that a search engine can decide on for itself.
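To check your own templates for this pattern, something like the following could be used. It is a rough sketch built on Python's standard `html.parser` module; the `RobotsMetaParser` class, the `audit_robots_meta` function, and the wording of the note are hypothetical names chosen for illustration. It only looks at `<meta name="robots">` tags and flags the case described above, where "noindex" is paired with "follow".

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. a bare attribute), so normalise.
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() != "robots":
            return
        for part in attr.get("content", "").split(","):
            part = part.strip().lower()
            if part:
                self.directives.add(part)


def audit_robots_meta(html):
    """Return (directives, notes) for one HTML document."""
    parser = RobotsMetaParser()
    parser.feed(html)
    notes = []
    if "noindex" in parser.directives and "follow" in parser.directives:
        # Per the article: "follow" cannot force a crawler to follow links.
        notes.append("'noindex, follow' found: 'follow' does not force link crawling")
    return parser.directives, notes


# Hypothetical page markup, for illustration only.
sample = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
directives, notes = audit_robots_meta(sample)
print(sorted(directives), notes)
```

The sketch deliberately stops at reporting. Whether to remove the tag or link the affected pages from elsewhere is a site-level decision.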

Read more about robots meta tags.

Listen to John Mueller answer the question from the 14:45 minute mark of the podcast:

Featured Image by Shutterstock/ShotPrime Studio
